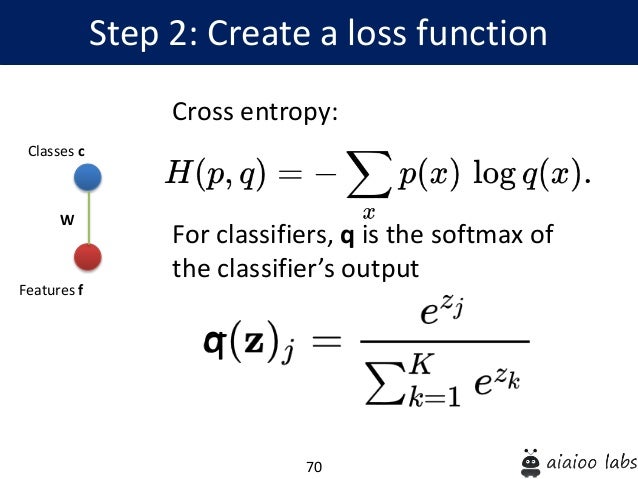

As a data scientist, you’re likely familiar with the concept of cross entropy. It’s a widely used loss function that measures the difference between two probability distributions, and it is particularly useful in classification tasks such as image recognition or natural language processing. In this post, we’ll dive into the technical details of cross entropy in PyTorch, a popular deep learning framework.

What is Cross Entropy?

Before we dive into the PyTorch implementation, let’s review what cross entropy is and why it’s useful. Cross entropy is a measure of the difference between two probability distributions. In machine learning, it’s often used to measure the difference between the predicted probability distribution and the true probability distribution.

For example, let’s say we’re training a machine learning model to classify images of cats and dogs. The model predicts the probability of each image being a cat or a dog. The true probability distribution is a one-hot vector: it holds 1 in the position of the correct class and 0 in the other, for example [1, 0] for a cat and [0, 1] for a dog. The predicted probability distribution is the output of the model, with one probability value for each class.

Cross entropy measures the difference between these two probability distributions:

$$H(y, \hat{y}) = -\sum_i y_i \log \hat{y}_i$$

where y is the true probability distribution (one-hot vector) and ŷ is the predicted probability distribution.

Now that we’ve reviewed the concept of cross entropy, let’s dive into the PyTorch implementation. In PyTorch, cross entropy is implemented as a loss function that takes two arguments: the predicted output and the true target. The predicted output is a tensor of size (batch_size, num_classes) holding raw, unnormalized scores (logits), because the loss applies the softmax internally. The true target is a tensor of size (batch_size) holding the index of the correct class for each example.

PyTorch provides a built-in class called nn.CrossEntropyLoss that implements cross entropy as a loss function.

```python
import torch
import torch.nn as nn

# Define the model
model = nn.Linear(10, 2)

# Define the loss function
criterion = nn.CrossEntropyLoss()

# Generate some random data
x = torch.randn(64, 10)
y = torch.randint(0, 2, (64,))

# Compute the loss
output = model(x)
loss = criterion(output, y)
```

In this example, we define a simple linear model with 10 input features and 2 output classes. We also define the cross entropy loss function using nn.CrossEntropyLoss(). Next, we generate some random data consisting of 64 examples with 10 input features and binary labels (0 or 1). We pass the input data x through the model to obtain the predicted output output, and then compute the cross entropy loss by passing output and the true labels y to the criterion function.

PyTorch also provides several options for customizing the behavior of nn.CrossEntropyLoss. For example, you can specify a weight tensor to assign different weights to different classes, or you can set the reduction argument to control how the loss is reduced over the batch.
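The `weight` and `reduction` options of nn.CrossEntropyLoss can be sketched roughly as follows. This is a minimal illustration rather than the post's original example: the data, the seed, and the class weights (`class_weights`) are made-up values chosen only to show the arguments in use.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # fixed seed so the sketch is reproducible

# Illustrative data (assumed values): 4 examples, 2 classes
logits = torch.randn(4, 2)
targets = torch.tensor([0, 1, 1, 0])

# Hypothetical class weights: mistakes on class 1 count three times as much
class_weights = torch.tensor([1.0, 3.0])

# reduction="none" keeps one (weighted) loss value per example
per_example = nn.CrossEntropyLoss(weight=class_weights, reduction="none")(logits, targets)

# reduction="sum" adds the weighted per-example losses together
summed = nn.CrossEntropyLoss(weight=class_weights, reduction="sum")(logits, targets)

# reduction="mean" (the default) divides that sum by the total weight of the targets
mean = nn.CrossEntropyLoss(weight=class_weights, reduction="mean")(logits, targets)

print(per_example, summed, mean)
```

Note that with a weight tensor, the mean is a weighted average (normalized by the summed weights of the target classes), not a plain average over the batch size.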

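To connect the built-in loss back to the definition of cross entropy between a one-hot target y and a predicted distribution ŷ, the sketch below computes the loss by hand and compares it with PyTorch's result. The tensors and seed are made-up values for illustration, and the manual version assumes the model outputs are logits that are turned into probabilities with a softmax.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)  # fixed seed so the sketch is reproducible

logits = torch.randn(3, 2)          # made-up raw scores: 3 examples, 2 classes
targets = torch.tensor([0, 1, 1])   # made-up class indices

# Predicted distribution (y_hat): softmax over the class dimension
y_hat = torch.softmax(logits, dim=1)

# True distribution (y): one-hot vectors built from the class indices
y = F.one_hot(targets, num_classes=2).float()

# Cross entropy per example, -sum_i y_i * log(y_hat_i), averaged over the batch
manual = -(y * torch.log(y_hat)).sum(dim=1).mean()

# Built-in version: takes logits and class indices directly
builtin = F.cross_entropy(logits, targets)

print(manual.item(), builtin.item())
```

The two values should agree up to floating-point error. In practice the built-in version is preferable because it fuses the softmax and the logarithm in a numerically stable way.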